I don’t go into Bryn Mawr’s Canaday Library as often as I used to, before the building of the wonderful Carpenter Library for archaeology, art history, and classics, but last week I found myself in Canaday’s main lobby to pick up a book from Interlibrary Loan. The lobby was busy. Students sat at long tables cluttered with books, scissors, glue, ribbons, and other craft supplies, and heaps of other books waited on carts. A librarian I know called me over to look and explained. The students were learning to fold books into hedgehogs, flowers, hearts, and other decorative shapes.

The books, my librarian friend assured me, had all been deaccessioned and were available online.

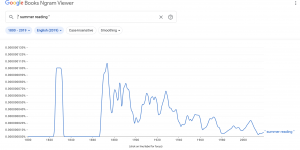

Here, I thought, is one of those moments when one can see the old order of knowledge giving way to the new. The books on the carts appeared to be mostly back issues of periodicals, which are indeed available online and therefore surplus to requirements at a modern academic library. To the undergraduate students especially, academic books—the physical, bound volumes—are beginning to be alien objects. In 2019, when last I taught a course at Bryn Mawr, I assigned a few books and had the bookstore stock them. Most of the students preferred to read them online, and the bound volumes languished in the bookstore. I had imagined a lost world, where “reading” for a course meant underlining, writing in margins (always in pencil, as one of my teachers advised me, so that you can eradicate stupidities when you’re older), taking notes in a notebook, and, for much of my reading, keeping a dictionary and a commentary open beside the text.

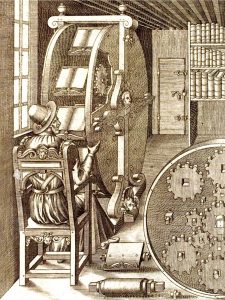

As I walked down Canaday’s front stairs, I thought of mummy cartonnage. In Egypt’s Ptolemaic and Roman periods, old papyrus documents, surplus to whatever the requirements of the day happened to be, were often torn into strips and converted to a kind of gesso-covered papier-mâché used to make mummy casings. When these cartonnages are dismantled, sometimes an important text, or parts of one, can be read—thus we know, for example, about Sappho’s poem on old age, and some new bits of Simonides. Classicists have to envision time in centuries and millennia, not the three or four decades that it’s taken to push into oblivion those books on the carts, waiting to be turned into hedgehogs. Will someone, someday a millennium or so from now, sit patiently unfolding a hedgehog hoping to recover what remains of volume 27 of Rheinisches Museum für Philologie?

~Lee T. Pearcy

March 29, 2024